NOTE that this code has been updated to support iOS6 and the 4 inch retina screen

Apple shakes it up – sort of

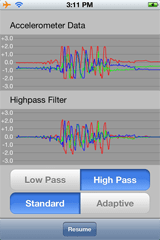

The App that I am currently working towards will use some accelerometer based gestures in order to configure some of its aspects in a fun way. So in looking around for example code that shows the response from the accelerometers I of course quickly found Apple’s sample App – AccelerometerGraph.

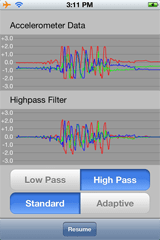

In my mind this App provides only the bare minimum of information and configuration in displaying the accelerometer data. I also think the actual graphing of the traces is not the best as:

- You can’t tell what axis is represented by which trace

- By grouping the raw data into one graph and the filtered data into the other graph you are really grouping unlike data together

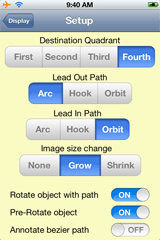

But that’s my opinion. Here is a screen shot of AccelerometerGraph so you can form your own opinion:

After playing around with this App for a bit I decided that I wanted a test bed with a bit more flexibility and one that also conformed to my ideas of data display best practices. Hence the idea for Shake was born.

We can re-build it

My design goals for my improvement on Apple’s App included:

- Split the display into 3 graphs – one for each axis

- Each graph would display raw, filtered and RMS values of the data with color coded traces

- Be able to select input data from the actual accelerometer input as well as three other calculated data sources: sine wave, step and impulse

- Implement the same filters as Apple, but also implement additional filters of my own choosing

- Calculate the RMS values of the filtered data over a moving window of arbitrary length

- Detect when the RMS value has exceeded a threshold value for a contiguous number of samples

- Be able to easily configure each axis either independently or all at the same time

- Release the source code of the resultant program (see right at the end!)

I managed to achieve all of this with the exception that I did not implement the adaptive aspect of Apple’s filters. I’m going to have to admit that when I got around to doing this I realized that my overall architecture was not conducive to doing so in an efficient way – and I was feeling way too lazy to re-engineer the needed changes!

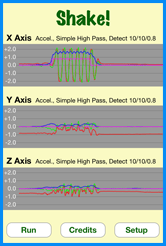

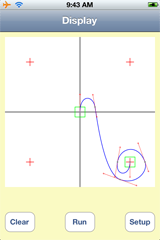

With all of that said, revealed here for the first time are the beautiful images of my latest creation!

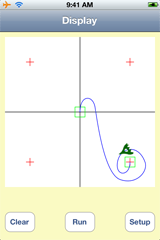

Step right up .. pick your Axis

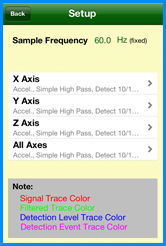

When you enter the setup for Shake! the first screen you are presented with is for the overall configuration of each axis as well as the “All Axes” choice. By selecting a single axis, you will head down the path of configuring only that one axis. The “All Axes” choice is special in that any configuration choices made here will be copied to the other 3 axes – however you will need to confirm this action before the copying occurs.

This screen also indicates that the sampling frequency of the overall Shake! system is fixed, and not changeable. This constraint comes about because the additional filters I included are designed to operate at a fixed sampling frequency – thus if that frequency is changed then the filters will need to be redesigned. So I had the choice of either including the filter design software that I used (more on that later) or being lazy and fixing the sampling frequency to what Apple used for AccelerometerGraph (which is also fixed!).

Finally this screen shows the meaning of each trace color used on the graphs on the main screen:

- Red – the raw signal used for this axis

- Green – the output from filtering the raw signal

- Blue – the RMS value of the filtered signal

- Pink – the detection of the RMS level exceeding its threshold

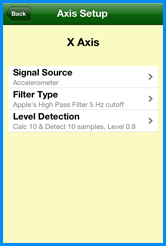

Axis Of Setup

For each of the axes the configuration choices are split up into 3 main sections:

- Signal source

- Filter Type

- Level Detection

And that’s about all you need to know!

Fresh Signal Sources! Get Your Fresh Signal Sources!

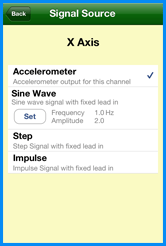

Within the Signal Source setup, there are 4 choices that can be made:

- The actual data from the accelerometer for the axis

- A calculated Sine wave generator, for which you can set the amplitude and frequency (within limits)

- A calculated step generator, for which you can configure nothing!

- A calculated Impulse generator, again for which you can’t configure anything

The limits of the sine wave generator are that the amplitude has to be between 0 and 2g, and the frequency has to be between 0 and 30 Hz (the Nyquist frequency, i.e. half the 60 Hz sampling frequency, in order to avoid aliasing).

In addition, all of the calculated signal sources output a fixed number of zero samples in order to let the filtering settle down before it starts to process the real data.

Within the Shake! code, the calculated signal generators were implemented in the JdSineSignal, JdStepSignal and JdImpulseSignal classes.

Filtering life’s little bumps

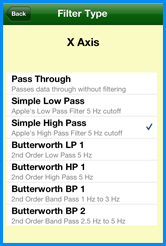

In order to satiate my desire for testing different filters with Shake!, I built in 7 different types:

- Pass Through – actually a “non-filter”

- Apple’s 5 Hz Low Pass 1st order filter

- Apple’s 5 Hz High Pass 1st order filter

- Butterworth 5 Hz Low Pass 2nd order filter

- Butterworth 5 Hz High Pass 2nd order filter

- Butterworth 1 to 3 Hz Band Pass 2nd order filter

- Butterworth 2.5 to 5 Hz Band Pass 2nd order filter

Implementing Apple’s filters allowed me to compare the response of Shake! with that of AccelerometerGraph and helped me eliminate a few bugs that I had missed.

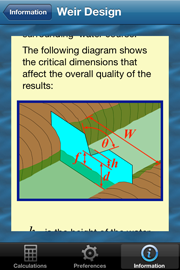

The interesting filters are the Butterworth ones. For people not versed in signal filtering, a Butterworth filter is a particular type of filter that is designed to have a frequency response that is as flat as possible in the pass band. There are several different types of basic filter configurations, of which a Butterworth filter is just one example. Other filter types are (for example) the Bessel filter, Chebyshev filter and Elliptic filter, each of which has different signal characteristics. However Butterworth filters were chosen for Shake! by a purely arbitrary decision – in fact any type of filter would probably have been suitable for what I am doing in Shake!

In their implementation all digital filters come down to a simple equation: a sum of coefficients multiplied by delayed signal values. The only differences between Butterworth, Bessel, etc. are the choice of the coefficients and where in the scheme of things these coefficients are applied. The most general equation for all digital filters would be something like:

y[n] = a.x[n] + b.x[n-1] + c.x[n-2] + .. + A.y[n-1] + B.y[n-2] + C.y[n-3] …

Where n is the current time index, so that n-1 is the previous time index, n-2 is the index before that etc. Thus x[n] is the current input signal, x[n-1] was the input signal last time. And y[n] is the new output, y[n-1] was the output for the previous signal etc etc. a, b, c, A, B, C are simple, fixed numerical coefficients. The trick to digital filter design is to pick the correct coefficients for the filter design that you want.

I’m not going to go into how to design a digital filter – that’s a whole university level course in itself, and something I did myself a long long time ago. What I can do though is to point you all at the filter design software that I found online.

The software was designed by Tony Fisher, a University of York lecturer in Computer Science, who unfortunately passed away several years ago. However his software can be found (as an interactive web page, and also for download) at Interactive Digital Filter Design.

Using this software is a breeze – all you need to do is to select the type of filter you want, the system sampling frequency and the frequency breakpoints of the filter. The software then pops out all the design details plus a C code template for coding the filter directly (and that minimizes multiplications). In addition it also generates a frequency and phase response graph for the filter.

So using the 5 Hz High Pass Butterworth filter as an example, the software generated this pseudo C code:

    #define NZEROS 2
    #define NPOLES 2
    #define GAIN   1.450734152e+00

    static float xv[NZEROS+1], yv[NPOLES+1];

    static void filterloop()
      { for (;;)
          { xv[0] = xv[1]; xv[1] = xv[2];
            xv[2] = next input value / GAIN;
            yv[0] = yv[1]; yv[1] = yv[2];
            yv[2] =   (xv[0] + xv[2]) - 2 * xv[1]
                         + ( -0.4775922501 * yv[0]) + (  1.2796324250 * yv[1]);
            next output value = yv[2];
          }
      }

Within Shake! I created a custom class JdGenericFilter that would take the details from one of these filter designs as a template and encapsulate all of the processing needed to cover any filter design up to a 10th order filter. For example my template for the above filter is:

    const static DigitalFilterTemplate butterworth2OHP5Hz = {
        /* Tag                 */ 2,
        /* Title               */ "Butterworth HP 1",
        /* Description         */ "2nd Order High Pass 5 Hz",
        /* Filter Class        */ kFilterClassHighPass,
        /* Sample Frequency Hz */ 60.0,
        /* Corner Freq 1 Hz    */ 5.0,
        /* Corner Freq 2 Hz    */ 0,
        /* Freq 3 Hz           */ 0,
        /* Order               */ 2,
        /* Gain                */ 1.450734152e+00,
        /* Number of Zeroes    */ 2,
        /* Number of X Coeffs  */ 3,
        /* X Coeffs            */ { 1, -2, 1 },
        /* Number of Poles     */ 2,
        /* Number of Y Coeffs  */ 2,
        /* Y Coeffs            */ { -0.4775922501, 1.2796324250 }
    };

And the actual filter is instantiated and used by code like:

    JdGenericFilter* filter = [[JdGenericFilter alloc] initFilter:butterworth2OHP5Hz];
    ..
    ..
    double input = ...;
    double output = [filter newInput:input];
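To show how a template like this can drive the filtering, here is a minimal sketch in plain C of a table-driven filter that follows the same shift-and-sum pattern as the generated code. It is not the JdGenericFilter implementation: FilterDesign, Filter, filter_init and filter_new_input are hypothetical names, and only the numeric values (gain and coefficients) are taken from the template above.

```c
#include <string.h>

#define MAX_ORDER 10  /* the post says designs up to 10th order are covered */

/* Hypothetical C mirror of the numeric part of a filter template. */
typedef struct {
    double gain;
    int    num_x;                    /* number of X coefficients (zeros + 1) */
    double x_coeffs[MAX_ORDER + 1];  /* applied to inputs, oldest first */
    int    num_y;                    /* number of Y coefficients (poles) */
    double y_coeffs[MAX_ORDER];      /* applied to past outputs, oldest first */
} FilterDesign;

typedef struct {
    FilterDesign design;
    double xv[MAX_ORDER + 1];  /* scaled input history, oldest first */
    double yv[MAX_ORDER + 1];  /* output history, oldest first */
} Filter;

/* Values copied from the 5 Hz high pass Butterworth template. */
static const FilterDesign butterworth_hp_5hz = {
    .gain = 1.450734152e+00,
    .num_x = 3, .x_coeffs = { 1, -2, 1 },
    .num_y = 2, .y_coeffs = { -0.4775922501, 1.2796324250 },
};

static void filter_init(Filter *f, const FilterDesign *d)
{
    memset(f, 0, sizeof *f);   /* start with all-zero histories */
    f->design = *d;
}

/* Push one input sample through the filter and return the new output. */
static double filter_new_input(Filter *f, double input)
{
    const FilterDesign *d = &f->design;
    int nx = d->num_x, ny = d->num_y;

    /* Shift both histories down by one sample. */
    for (int i = 0; i < nx - 1; i++) f->xv[i] = f->xv[i + 1];
    f->xv[nx - 1] = input / d->gain;
    for (int i = 0; i < ny; i++) f->yv[i] = f->yv[i + 1];

    /* Sum of coefficients times delayed inputs and delayed outputs. */
    double out = 0.0;
    for (int i = 0; i < nx; i++) out += d->x_coeffs[i] * f->xv[i];
    for (int i = 0; i < ny; i++) out += d->y_coeffs[i] * f->yv[i];

    f->yv[ny] = out;           /* newest output goes in the last slot */
    return out;
}
```

With this design a constant input should decay towards zero at the output, which is what you would expect from a high pass filter. Looping over the coefficient arrays is convenient for a test bed, but it does more multiplications than the hand-optimized generated code.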

The big caveats with this filter class are:

- The code is nowhere near the most efficient code in the world and should not be used for a real world digital filter.

- If you change the sampling frequency, then you HAVE to change the filter design.

Finally, I implemented Apple’s filters in the JdSimpleLP and JdSimpleHP classes.
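For comparison with the Butterworth designs, a generic 1st order low pass and high pass filter can be sketched in a few lines of plain C. This is the textbook RC-style form, not the code from JdSimpleLP, JdSimpleHP or Apple's sample: the names are hypothetical, and the way the smoothing constant is derived from the cutoff frequency (RC = 1 / (2 * pi * fc)) is an assumption that may differ from what Apple's filters actually use.

```c
/* State shared by the two hypothetical 1st order filters. */
typedef struct {
    double alpha;     /* smoothing constant derived from the cutoff */
    double prev_in;   /* previous input sample (high pass only) */
    double prev_out;  /* previous output sample */
} SimpleFilter;

static const double kPi = 3.141592653589793;

static void simple_lp_init(SimpleFilter *f, double sample_hz, double cutoff_hz)
{
    double dt = 1.0 / sample_hz;
    double rc = 1.0 / (2.0 * kPi * cutoff_hz);  /* assumed RC definition */
    f->alpha = dt / (rc + dt);
    f->prev_in = 0.0;
    f->prev_out = 0.0;
}

/* Low pass: move the output a fraction alpha towards the new input. */
static double simple_lp_step(SimpleFilter *f, double in)
{
    f->prev_out += f->alpha * (in - f->prev_out);
    return f->prev_out;
}

static void simple_hp_init(SimpleFilter *f, double sample_hz, double cutoff_hz)
{
    double dt = 1.0 / sample_hz;
    double rc = 1.0 / (2.0 * kPi * cutoff_hz);  /* assumed RC definition */
    f->alpha = rc / (rc + dt);
    f->prev_in = 0.0;
    f->prev_out = 0.0;
}

/* High pass: pass on changes in the input, let steady levels decay away. */
static double simple_hp_step(SimpleFilter *f, double in)
{
    double out = f->alpha * (f->prev_out + in - f->prev_in);
    f->prev_in = in;
    f->prev_out = out;
    return out;
}
```

Initialized with a 60 Hz sample rate and a 5 Hz cutoff, the low pass output settles on a constant input while the high pass output decays to zero, which is the behaviour you can see in the green traces when feeding Shake! the step source.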

Crossing the (RMS) threshold

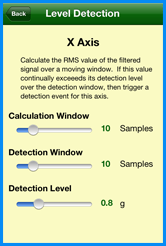

Filtering the signal is just the first part of the battle. Detection of when the signal has exceeded a threshold is the next part – for which the Level Detection setup defines how this is done.

The basis of the Level Detection is to simply calculate the RMS value of the filtered signal over a rolling sample window, and then indicate a trigger when that level has exceeded a threshold for a set number of samples. The setup for the Level Detection reflects these requirements and allows you to independently change all three variables.

The RMS (or Root Mean Square) value of a signal is simply the square root of the mean of the squares of the input values. Thus it is useful for detecting the absolute level of a signal that varies in sign (e.g. an acceleration). By calculating the RMS value over a window of samples, you can tune how much history of the signal is used – the shorter the window, the shorter the history.

After calculating the RMS value, its value is compared against a fixed threshold value. If the RMS value continuously exceeds that threshold for a fixed number of samples, then a trigger is generated which causes the associated trace on the graph to change. For convenience the trace changes to the trigger level when such an event occurs.

Within the Shake! code, the RMS detection is implemented in the JdRMS class.

And You can build it too

Once again I am releasing my source code to GitHub under a BSD-3 style license, so you are free to use it however you like as long as you acknowledge where it originated from. And if you do use any part of it please drop me a line to let me know!

You can download the source code for Shake from GitHub at https://github.com/JoalahDesigns/Shake

Until the next blog …

Hope you all enjoy this latest installment.

Peter